Plain text without markup visible

Text with markup made visible via CSS stylesheet

Background and introduction

This project is an initial foray meant to serve as a proof-of-concept for a larger (potential) dissertation project. As such, like a lot of digital humanities work, it is ongoing and unfinished. But it has reached a point where I would like to make it public (so far as my blog is public), both because it is at a good place for feedback and because it is my final project for #f14tmn.

The overarching idea of the project is to explore the potentials of using markup (specifically, customized TEI) as the primary compositional method in a first-year writing course. First, I think that this method of composition will defamiliarize students’ notions of composing by changing their primary compositional tool from the word processor to an XML editor. I’d like to leverage this defamiliarization to underscore that all texts are mediated and situated in very specific ways. Second, I hope that having students apply markup (which is essentially metadata) to their texts will promote a metacognitive awareness of the (often implicit) rhetorical choices that are made during the composition process.

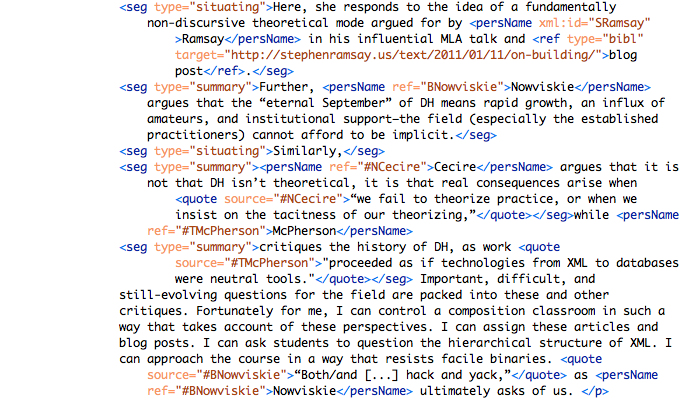

To begin this project, I’ve created a prototype (see above) using one of my own pieces of writing. Though the writing is about this project, the content of the document is not the concern here. Rather, the goals of the prototype are:

- To showcase a potential visual display of a marked-up text.

- To inform the development of a foundational customized TEI schema.

- To develop TEI and CSS templates for use with undergraduates.

Theoretical framework

Briefly, there are three primary theoretical threads that inform this work. I’ve written about each of these more extensively on my blog, and I’ll link to these posts as appropriate, but for the sake of context I will rehearse some of my thoughts here.

First, I conceive of markup as a kind of direct visualization, an idea drawn from Lev Manovich’s description of “direct visualisation without sampling” (I have written about this here and here). The capabilities of digital technology are such that there may be ways to visualize materials without reducing them at all. When describing what direct visualization would look like without reduction/sampling, Manovich analogizes:

But you have already been employing this strategy [direct visualization] if you have ever used a magic marker to highlight important passages of a printed text. Although text highlighting is not normally thought of as visualisation, we can see that in fact it is an example of “direct visualisation without sampling”. (44)

My contention is that visualization can be used as a tactical term to get students thinking productively about what text encoding affords. Further, the simplicity of highlighting is a good way to get students thinking about the ways they already add visual metadata to texts. This approach is evident in the marked-up prototype, which is essentially a more interactive version of a highlighted text.

The second theoretical thread that informs this project is critical making as a hybrid methodology that balances the role of theory and practice in the digital humanities (I worked through this debate in detail here). The key aspect I want to get at here is something Bethany Nowviskie asserts when talking about Neatline:

Neatline sees humanities visualization not as a result but as a process: as an interpretive act that will itself – inevitably – be changed by its own particular and unique course of creation. Knowing that every algorithmic data visualization process is inherently interpretive is different from feeling it, as a productive resistance in the materials of digital data visualization. So users of Neatline are prompted to formulate their arguments by drawing them.

The act of drawing is productive in a way that abstract thinking about drawing cannot be.

Finally, the project draws on Wendell Piez’s concept of “exploratory markup,” outlined in his brilliant article, “Beyond the ‘descriptive vs. procedural’ distinction” (in Markup Languages: Theory and Practice 3.2 (2001): 141-172). I have outlined Piez’s influence on my project here, but it is worth briefly noting his major contribution to my thinking. Piez ultimately argues that generic markup languages like TEI function as a kind of rhetoric about rhetoric:

One thing that does need to be observed here … is that in markup, we have not just a linguistic universe (or set of interlocking linguistic universes) but also a kind of “rhetoric about rhetoric.” That is, markup languages don’t simply describe “the world” — they describe other texts (that describe the world). (Piez 163)

This speaks to my aim to develop students’ metacognitive awareness through markup.

Previous projects

There are two projects that have influenced my approach. I have written about Trey Conatser’s XML-based first-year writing course at Ohio State here. And my discussion of Kate Singer’s use of TEI in a poetry course can be read here.

Here, it will suffice to mention my primary points of departure from these projects. First, instead of dictating the schema for writing assignments as Conatser does, I’d like to think about how building an emergent customized TEI schema, adding tags as the need for them arises, can benefit the metacognitive awareness of students as they compose. Second, Singer’s ultimate investment in TEI is in the service of a traditional humanities methodology: close reading. The final project in her class was not a scholarly edition of poetry built in TEI, but rather a hermeneutical paper based around the close reading of texts. My approach necessarily differs, as I will be teaching a composition course rather than a poetry or literature course. I would like to retain the open and interpretive tagging that Singer’s approach employs while keeping the goal of metacognitive awareness that Conatser aims for.

It is also important to note that Singer’s approach to display was also influential: her class applied simple CSS stylesheets to display their XML documents.

Visualizing TEI

Again, in developing the CSS stylesheets, my goal was to create template files that would be useful for students. I’ve included extensive comments (see below for example) to explain the functions of different sections and declarations. This will help students navigate the CSS stylesheets and teach them best practices for producing their own.

A major decision that I have wrestled with is the mechanism by which we make the XML documents visible. Below is a very condensed comparison of affordances and limitations of the three approaches I’ve considered: using XSLT to transform the XML to HTML, using CSS to style the XML directly, and using the TEI Boilerplate.

| XML + XSLT | XML + CSS | TEI-BP |
| --- | --- | --- |
|  |  |  |

Ultimately, for this prototype, I decided to style the XML directly via CSS. The main drawback I have run into with this approach is that the linking mechanisms in XML are not recognized by web browsers. As such, none of the references are currently linked, though URLs do populate the reference rollovers. I think the affordances of this approach, especially the ground-up approach and level of student involvement, outweigh the limitations. I plan to look into some light XSLT for the linking mechanisms to solve this problem.
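As a rough idea of what that “light XSLT” might look like, here is an untested sketch. It assumes the references are encoded as TEI `<ref>` elements with a `target` attribute (the prototype may encode them differently), copies the document through unchanged, and swaps each such reference for an anchor in the XHTML namespace, which browsers generally treat as a real link even inside an XML document styled with CSS.

```xml
<?xml version="1.0" encoding="UTF-8"?>
<xsl:stylesheet version="1.0"
    xmlns:xsl="http://www.w3.org/1999/XSL/Transform"
    xmlns:tei="http://www.tei-c.org/ns/1.0"
    xmlns="http://www.w3.org/1999/xhtml"
    exclude-result-prefixes="tei">

  <!-- Identity template: by default, copy every node and attribute as-is. -->
  <xsl:template match="@*|node()">
    <xsl:copy>
      <xsl:apply-templates select="@*|node()"/>
    </xsl:copy>
  </xsl:template>

  <!-- Assumption: references are tei:ref elements carrying a target URL.
       Each one is replaced by an XHTML anchor pointing at that URL. -->
  <xsl:template match="tei:ref[@target]">
    <a href="{@target}">
      <xsl:apply-templates/>
    </a>
  </xsl:template>

</xsl:stylesheet>
```

Any XSLT 1.0 processor should be able to run this as a pre-processing step, for example `xsltproc links.xsl essay.xml > essay-linked.xml`, where both file names are placeholders.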

In the prototype, I’ve used two stylesheets: one that simply structures the XML into an unmarked but formatted document, and one that visualizes the markup via highlighting and rollovers. Since the XML documents are identical for both stylesheets, it would be easy for students to have more than two versions of their document. This faceted approach allows students to pull out certain aspects of the markup in specific versions of their documents.
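One way to wire this up, assuming the stylesheets are attached with the standard `xml-stylesheet` processing instruction, is sketched below. The file name `marked.css` and the document content are hypothetical. Each version of the document would differ only in the `href` on the second line.

```xml
<?xml version="1.0" encoding="UTF-8"?>
<!-- Hypothetical file name: change href (e.g. to plain.css) for another view of the same text. -->
<?xml-stylesheet type="text/css" href="marked.css"?>
<TEI xmlns="http://www.tei-c.org/ns/1.0">
  <teiHeader>
    <fileDesc>
      <titleStmt><title>Sample essay (placeholder)</title></titleStmt>
      <publicationStmt><p>Unpublished student draft.</p></publicationStmt>
      <sourceDesc><p>Born digital.</p></sourceDesc>
    </fileDesc>
  </teiHeader>
  <text>
    <body>
      <p>The body of the essay is the same in every version.</p>
    </body>
  </text>
</TEI>
```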

Markup / custom schema

Since we are not using TEI Boilerplate, the question arises: why have the students use TEI at all? Why not just have them mark up freely in XML and match the CSS to whatever tags they employ?

The benefits of creating a custom schema are twofold. First, it allows me to constrain the elements we start with. This is a pragmatic concern, as I don’t want students to be overwhelmed by the sheer number of tags available to them. Second, a custom TEI schema is (functionally) infinitely extensible. This is important because I envision adding elements as their need arises during the class. This extensibility will allow for the type of exploratory markup that Piez describes, while the in-class conversations that seek to formalize these exploratory tags will be productive in having students make explicit the implicit rhetorical choices they make in writing. These formalizations would not take place if all students were not validating to a common schema.

The groundwork for the customization is being laid with this prototype. The tags I’ve employed will be compared to the existing TEI schema to create an ODD file which will generate the customized schema. Most of the elements that will be added are currently tagged using <seg>, and differentiated with the type= attribute (example pictured below).
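As a rough illustration of this interim tagging, a paragraph might look something like the fragment below. The `type` values (`claim`, `evidence`) and the sentences themselves are hypothetical stand-ins, not the tags actually used in the prototype.

```xml
<!-- Hypothetical fragment: the type values are illustrative only. -->
<p xmlns="http://www.tei-c.org/ns/1.0">
  <seg type="claim">Markup makes rhetorical choices visible.</seg>
  <seg type="evidence">Highlighting a printed page is a familiar version of the same move.</seg>
</p>
```

Because every exploratory tag is a `<seg>` with a different `type`, the document stays valid against standard TEI until the values are formalized.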

Through this exploratory tagging, I will build the constrained schema. The same method will be used for the expansion of the schema as needs arise. Students will use elements outside the schema and propose them for inclusion.
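To make the idea of a constrained schema a little more concrete, here is an untested sketch of what the ODD might contain. Everything specific in it is an assumption: the schema name `fyw_foundation`, the short list of included elements, and the `claim` and `evidence` values are placeholders for whatever the exploratory tagging actually produces.

```xml
<?xml version="1.0" encoding="UTF-8"?>
<TEI xmlns="http://www.tei-c.org/ns/1.0">
  <teiHeader>
    <fileDesc>
      <titleStmt><title>Foundation schema for first-year writing (sketch)</title></titleStmt>
      <publicationStmt><p>Unpublished draft.</p></publicationStmt>
      <sourceDesc><p>Born digital.</p></sourceDesc>
    </fileDesc>
  </teiHeader>
  <text>
    <body>
      <!-- Hypothetical schema name; start="TEI" makes TEI the root element. -->
      <schemaSpec ident="fyw_foundation" start="TEI">
        <!-- Infrastructure and header modules, included whole. -->
        <moduleRef key="tei"/>
        <moduleRef key="header"/>
        <!-- Only a small, illustrative subset of elements is let in. -->
        <moduleRef key="core" include="p head hi ref title list item"/>
        <moduleRef key="textstructure" include="TEI text body div"/>
        <moduleRef key="linking" include="seg"/>
        <!-- Require a type on seg and close its list of values.
             New values would be added here as the class proposes them. -->
        <elementSpec ident="seg" module="linking" mode="change">
          <attList>
            <attDef ident="type" mode="change" usage="req">
              <valList type="closed" mode="add">
                <valItem ident="claim"/>
                <valItem ident="evidence"/>
              </valList>
            </attDef>
          </attList>
        </elementSpec>
      </schemaSpec>
    </body>
  </text>
</TEI>
```

Closing the value list is a design choice rather than a requirement: it means a student who invents a new `type` gets a validation error, which is the prompt for the in-class conversation about whether to add it.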

Next steps

As this project is developing, there are a few next steps on the immediate horizon that I’d like to mention here:

- Create constrained “foundation” schema based on the tags I’ve employed in the prototype.

  - This will necessitate the creation of an ODD file

  - I am attending a TEI customization workshop in January that will help in this regard

- Explore possibilities of XSLT to address issues with directly styling the XML with CSS

  - Linking will help with citations and cross-references in document

  - Linking will allow for a more seamless integration of multiple stylesheets (rather than multiple links from one HTML page)

- Explore XML + CSS compatibility with Omeka